Since the release of ChatGPT in late 2022, many have believed AI would become the ultimate equalizer, as the internet once was. Anyone with access could suddenly become more productive, more capable, and more competitive.

That narrative is starting to collapse.

A new reality is emerging: AI is not cheap, and “all-you-can-eat” access is dying.

At first glance, AI seems increasingly affordable. Token prices have dropped dramatically. So why are companies tightening access, introducing credits, and experimenting with ads?

Three things are happening at once: users are consuming far more AI than before, workloads are becoming more complex (multi-step agents, long-running tasks), and infrastructure costs scale non-linearly. As a result, AI companies are losing money, even on paying users.

AI has two phases:

- Training is done once, offline, in controlled environments

- Inference happens every time you use the model

Most people assume training is the expensive part, but it’s not. Inference is the real cost center.

- Training GPT-4 reportedly cost about $150M

- Serving it (inference) has reportedly cost billions

Even optimized setups still struggle economically, because:

- Inference runs at scale (millions of users, in real time)

- It requires massive memory bandwidth (not just GPUs)

- It must be low-latency and always available

Why Your “Unlimited Plan” Is About to Disappear

The “buffet model” of AI, in which you pay a flat fee and use as much as you want, is fundamentally broken. It is being replaced by consumption-based pricing, similar to electricity, water, or cloud compute.

The pattern is consistent: Base plan -> limited usage -> expensive overages

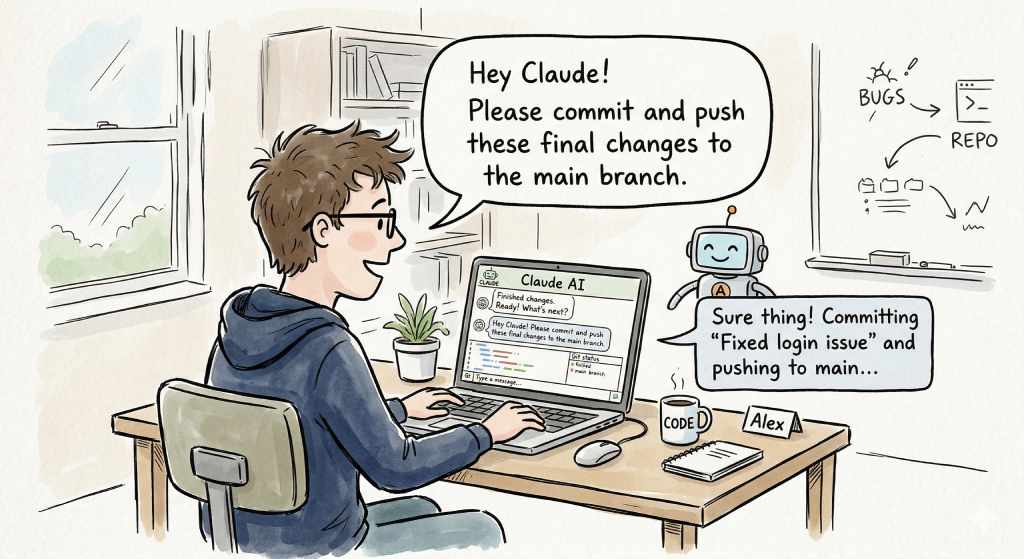
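To make the base plan, limited usage, and overage pattern concrete, here is a minimal sketch of how such a bill could be computed. The `Plan` class, the fee, the allowance, and the overage rate are all hypothetical numbers chosen for illustration, not any vendor's actual pricing.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Plan:
    """A hypothetical metered plan; every number here is illustrative."""
    base_fee: float             # flat monthly fee in dollars
    included_tokens: int        # tokens covered by the base fee
    overage_per_million: float  # dollars per extra million tokens


def monthly_bill(plan: Plan, tokens_used: int) -> float:
    """Base fee plus an overage charge on tokens beyond the allowance."""
    extra = max(0, tokens_used - plan.included_tokens)
    return plan.base_fee + extra / 1_000_000 * plan.overage_per_million


plan = Plan(base_fee=20.0, included_tokens=5_000_000, overage_per_million=10.0)
light = monthly_bill(plan, 2_000_000)   # stays within the allowance
heavy = monthly_bill(plan, 50_000_000)  # 45M tokens of overage
print(f"light user: ${light:.2f}, heavy user: ${heavy:.2f}")
```

Under these assumed numbers, the light user pays only the base fee while the heavy user's bill is dominated by overage, which is the point of metering.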

Simple prompts are cheap, but when you ask an AI to search emails or navigate systems, you’re triggering long-running inference loops that consume massive token volumes.

Some agents can run for hours on a single task, and that changes everything. AI is no longer a query tool. It’s becoming a continuous compute workload.
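A rough way to see why agent loops are so much heavier than single prompts is to count tokens. The sketch below assumes a simplified agent that re-sends its entire accumulated context on every step, with no prompt caching or context trimming (real systems may use either). The function names and all sizes are hypothetical.

```python
def single_prompt_tokens(prompt: int, reply: int) -> int:
    """Tokens processed for one ordinary question and answer."""
    return prompt + reply


def agent_loop_tokens(initial_context: int, steps: int, added_per_step: int) -> int:
    """Total tokens processed when every step re-reads the full context.

    added_per_step lumps together the model's output and any tool results
    appended to the context after each step (a simplifying assumption).
    """
    total = 0
    context = initial_context
    for _ in range(steps):
        total += context + added_per_step  # read the context, produce new tokens
        context += added_per_step          # new tokens join the context
    return total


simple = single_prompt_tokens(prompt=1_000, reply=500)
agent = agent_loop_tokens(initial_context=1_000, steps=50, added_per_step=500)
print(f"single prompt: {simple} tokens, 50-step agent: {agent} tokens")
```

Because the context grows with every step, total tokens grow roughly with the square of the number of steps in this model, so a 50-step task costs far more than 50 simple prompts.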

Another hidden constraint is memory. Training is GPU-heavy, but inference is memory-heavy. To serve responses quickly, a model must sit in memory, and not consumer RAM but specialized, high-bandwidth memory.
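A back-of-the-envelope calculation shows how quickly memory adds up. The sketch below estimates the memory needed just to hold a model's weights, as parameter count times bytes per parameter. The 70-billion-parameter model is a hypothetical example, and the estimate ignores the extra memory needed for per-request state such as the key-value cache.

```python
def weight_memory_gb(num_params: int, bytes_per_param: float) -> float:
    """Memory for model weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bytes_per_param / 1e9


PARAMS = 70_000_000_000  # hypothetical 70B-parameter model

# Common numeric precisions and their storage cost per parameter.
for label, bytes_per_param in [("16-bit", 2), ("8-bit", 1), ("4-bit", 0.5)]:
    print(f"{label}: {weight_memory_gb(PARAMS, bytes_per_param):.0f} GB")
```

Even at reduced precision, the weights of a model this size can exceed what a single accelerator holds, which is one reason serving is spread across several devices.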

AI as a Salary Component

One of the most interesting emerging trends is AI tokens as compensation.

In Silicon Valley, companies are exploring a new package for employees: salary + equity + AI compute credits

AI is now a core productivity multiplier, and access to compute means the ability to produce value.

Some industry voices (like Nvidia’s CEO) suggest that highly paid engineers should be consuming hundreds of thousands of dollars in AI tokens annually.

This reframes AI not as a tool or subscription but as a resource that is allocated like a budget.

The End of Lazy Prompting

This shift has a cultural implication. When tokens are cheap, people spam prompts. When they cost money, people plan first, design workflows, and optimize their prompts (remember “prompt engineering”?).

This new paradigm has two sides:

The downside

- Advanced AI becomes gated by budget

- Smaller teams may be outpaced

- The “AI equalizer” narrative weakens

The upside

- Forces disciplined usage

- Rewards structured thinking

- Eliminates low-skill, high-noise workflows

AI becomes less of a toy and more of a serious production resource.

We are entering a phase where AI is metered, and the key question is no longer “What can AI do?” but “Is this worth the tokens?”

The buffet is over.