Whenever a disruptive technology hits the market, we are quick to comfort ourselves with a familiar historical refrain: “Technology has never permanently replaced humans. People didn’t lose their jobs; they just moved on to better ones.” It’s a consoling narrative. It frames Artificial Intelligence as one more upgrade in a long line of human innovations: a digital forklift for our minds that makes us more productive. But treating AI as just another item in the human toolbox misses the fundamental nature of what we are building.

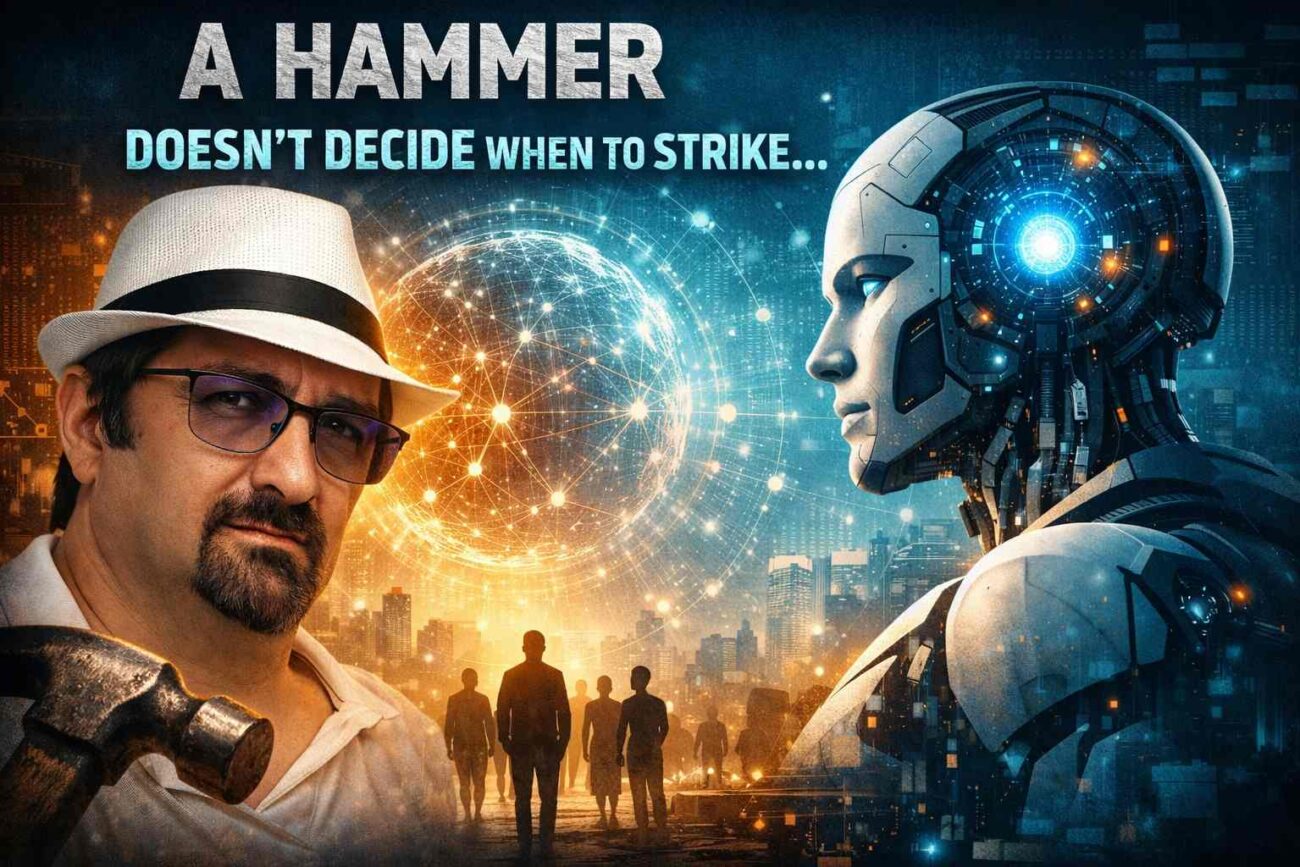

The truth is, a hammer doesn’t decide when or why to act. AI, eventually, might.

The Comprehension Gap

Throughout history, humans have been able to intuitively grasp how our tools work. We understand the graphite friction of a pencil. We understand the mechanical rotation of a washing machine, the combustion in a car engine, the radiation of a microwave, and the logic gates of a traditional computer.

AI breaks this lineage. While we built the architecture of AI, we are increasingly unable to map exactly how it arrives at its outputs. We know it learns, identifies patterns, and makes decisions based on massive volumes of data. We know what it is capable of achieving. But, much like a biological brain, the exact internal pathways of an advanced neural network are a black box. We are no longer just building tools; we are growing them.

From Instruments to Agents

Right now, AI is largely used as a highly advanced tool. You prompt it, and it responds. It is not yet fully autonomous.

But the trajectory is clear: AI is moving from a passive instrument to an active, autonomous agent. A hammer requires a hand to swing it, but an autonomous AI agent can be given a goal and left to figure out the how and when on its own.

The real paradigm shift isn’t just about automation. It’s about the fact that we are giving birth to something vastly smarter than ourselves.

Our Future Relationship with AI

Imagine the wolf. A wolf is highly intelligent within its context. It lives in a complex social structure, hunts effectively, and protects its pack. But then, a new entity arrived on the scene: the human.

Humans possessed an intelligence that wolves couldn’t even begin to conceptualize. A wolf cannot comprehend the concept of an economy, the physics of a gun, or the engineering of a city. To the wolf, human actions are indistinguishable from magic.

Faced with this superior intelligence, wolves took one of two paths. Some stayed in the wild, constantly outmaneuvered by an entity they couldn’t understand. The others became the dog.

The dog adapted. It aligned itself with the human, trading its wild independence for the safety, shelter, and food provided by a vastly superior entity. In the modern tech landscape, a human refusing to adapt to AI is like a wolf left in the wild, outpaced by forces it refuses to engage with. To survive and thrive, we must become the dog, partnering with this new, higher intelligence.